Does inference have an answer to your hallucination problem?

Yes, and the strategy is called Test-Time Compute Scaling.

The last three years were dominated by the race to scale model training. Bigger datasets and larger GPU clusters drove the leap from GPT-3 to GPT-4, from early LLaMAs to Gemini, from “just text” to multimodal reasoning. That era gave us the raw capability.

But once a model is trained, where is its value truly realized?

Not in the datacenter that produced it, but in the billions of inferences it serves daily. This is where the real frontier lies: inference compute.

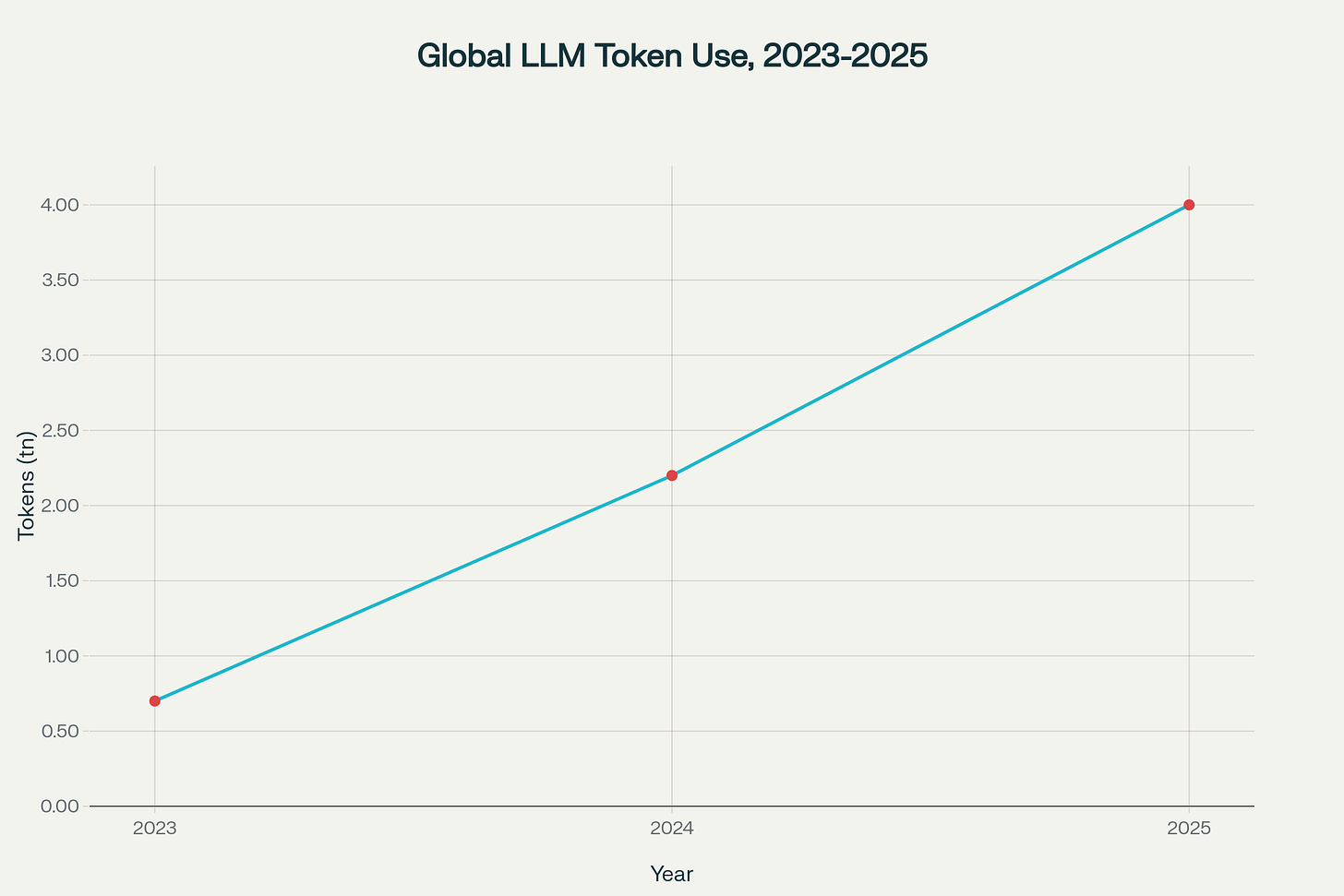

As part of the team handling inference compute at Azure OpenAI, I have had the privilege of being on the front line of the sheer volume that inference drives. That volume is growing at rocket speed, with changes visible not over months but over days. In my view, we are only beginning to crawl into the inference landscape.

Inference has a fundamentally different characteristic than training: it is elastic. Training is a one-shot event where you scale up until the model converges. Inference, by contrast, happens query by query, workflow by workflow. Each request can be cheap or expensive depending on how much “thinking time” you allow the model.

This is what we mean by test-time compute scaling: deciding at runtime how much computation to spend per query.

That elasticity creates a central tension every infra team now feels: latency vs. reliability vs. cost.

Push for low latency and you risk brittle, hallucinated outputs.

Push for reliability and you must tolerate higher token counts, retries, or parallel search.

Push for cost efficiency and you need clever policies that dynamically adapt compute to the hardness of the query.

Balancing these forces is quickly becoming as important as training breakthroughs themselves.

Estimated global token usage by LLMs: 2023-2025 (in trillions of tokens)

What is Test-Time Compute Scaling?

At its core, test-time compute scaling is about spending more computational effort during inference to get a better answer. Instead of running a single generation and taking the first decoded response, we allow the model to “think longer” by exploring multiple reasoning paths, searching, verifying, and then selecting the most reliable outcome. Crucially, none of this requires retraining the model; the weights remain frozen. What changes is the strategy we apply at inference.

It is best seen as a spectrum. On one end, you have the cheapest possible decode: a single greedy generation with near-instant output. On the other end, you have heavyweight inference: dozens of parallel samples, beam search over reasoning chains, verifier models cross-checking results, even iterative self-repair loops.

The space in between is where most real-world applications will land, allocating compute dynamically based on query difficulty, user expectations, or system policy.

This makes test-time compute scaling a knob we can dial per request, unlike training, where scale is fixed once you launch a run. This knob is becoming an essential piece of infrastructure for anyone orchestrating production-grade AI workflows.
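One way to picture the knob is as a small policy function that maps a request to a compute budget. The sketch below is purely illustrative: the `ComputeBudget` fields, the difficulty score, and the thresholds are all assumptions made up for this example, not values from any production system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeBudget:
    """Hypothetical per-request budget; the field names are illustrative."""
    num_samples: int       # how many candidates to generate
    use_verifier: bool     # whether to score candidates with a verifier
    max_repair_iters: int  # how many critique/revise rounds to allow


def choose_budget(difficulty: float, latency_sensitive: bool) -> ComputeBudget:
    """Map an estimated difficulty in [0, 1] to a compute budget.

    The thresholds (0.3, 0.7) are placeholders to be tuned per workload.
    """
    if not 0.0 <= difficulty <= 1.0:
        raise ValueError("difficulty must be in [0, 1]")
    if difficulty < 0.3:
        # Easy query: a single cheap decode.
        return ComputeBudget(num_samples=1, use_verifier=False, max_repair_iters=0)
    if difficulty < 0.7 or latency_sensitive:
        # Medium query, or a hard one with a tight latency target.
        return ComputeBudget(num_samples=5, use_verifier=True, max_repair_iters=0)
    # Hard query with latency to spare: spend the most.
    return ComputeBudget(num_samples=16, use_verifier=True, max_repair_iters=2)
```

How the difficulty estimate is produced (a classifier, a heuristic on prompt length, a cheap first pass) is left open here; the point is only that the budget is decided per request, at runtime.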

AI workflows have evolved to include a large number of steps: retrieval, reasoning, code execution, summarization, routing between agents, and handoffs to humans or external APIs. In these workflows, errors are multiplicative (ref #1). A single weak step (a misparsed field, a hallucinated fact, a failed function call) can derail the entire DAG. The deeper the pipeline, the more fragile it becomes.
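To see why depth hurts, assume (as a simplification) that every step succeeds independently with the same probability. The end-to-end success rate is then just that probability raised to the number of steps:

```python
def pipeline_success_rate(step_success: float, num_steps: int) -> float:
    """End-to-end success probability, assuming independent, equally reliable steps."""
    return step_success ** num_steps


for steps in (1, 5, 10, 20):
    rate = pipeline_success_rate(0.95, steps)
    print(f"{steps:>2} steps at 95% each -> {rate:.1%} end to end")
```

Under that assumption, ten steps at 95% each succeed end to end only about 60% of the time. Real pipelines have correlated failures and uneven step quality, so treat this as intuition rather than a forecast.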

This is where test-time compute scaling becomes essential. By allocating more inference compute at the leaves of the workflow, the points where correctness matters most, one can catch failures before they cascade.

One of the underappreciated aspects of test-time compute scaling is that it maps naturally onto parallel execution patterns we already know from distributed systems. Multi-sample voting, best-of-N reranking, and tree-of-thoughts search aren’t strictly sequential processes. Their samples and branches can be evaluated in parallel.

That parallelism changes the character of test-time scaling. Instead of thinking of it as “retry until you get a good answer,” you can treat it as fanout followed by aggregation. Fire off ten reasoning paths in parallel, score them as they arrive, and stop early if a quorum emerges. Or run multiple candidates asynchronously, let verifiers score them on the side, and promote a winner as soon as confidence is high enough. This is the same pattern we’ve been using for years in web services (hedged requests, quorum reads in distributed databases, speculative execution in MapReduce), only now applied at the inference layer.
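A minimal sketch of that fanout-and-quorum pattern, using Python's `asyncio`. The `fake_sample` coroutine is a stand-in for a real model call, and the quorum size is an arbitrary choice for illustration:

```python
import asyncio
from collections import Counter
from typing import Awaitable, Callable, Optional


async def fanout_with_quorum(
    sample: Callable[[int], Awaitable[str]],
    num_paths: int,
    quorum: int,
) -> Optional[str]:
    """Launch num_paths samples concurrently; return once any answer has quorum votes.

    Returns None if every path finishes and no answer reached the quorum.
    """
    tasks = [asyncio.create_task(sample(i)) for i in range(num_paths)]
    votes: Counter = Counter()
    try:
        for finished in asyncio.as_completed(tasks):
            answer = await finished
            votes[answer] += 1
            if votes[answer] >= quorum:
                return answer  # quorum reached: stop waiting for stragglers
        return None
    finally:
        for task in tasks:
            task.cancel()  # no effect on finished tasks; stops the slow ones
        await asyncio.gather(*tasks, return_exceptions=True)


async def fake_sample(i: int) -> str:
    """Stand-in for a model call: later paths are slower, every fourth one disagrees."""
    await asyncio.sleep(0.01 * i)
    return "41" if i % 4 == 3 else "42"


if __name__ == "__main__":
    print(asyncio.run(fanout_with_quorum(fake_sample, num_paths=10, quorum=3)))
```

Cancelling the remaining tasks is what turns the quorum into a latency win: the caller pays for the fastest agreeing paths, not the slowest straggler. Whether cancellation also frees GPU work depends on the serving stack, which this sketch does not model.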

The unlock is that these techniques let us trade off latency against reliability without always paying the full sequential cost. From an infrastructure perspective, this means test-time scaling is not a modeling hack; it’s a workflow execution problem.

How you shard inference requests across GPUs, how you pipeline verifiers, and how you detect stragglers all become first-class concerns. Just as batch processing frameworks like Spark made parallel data transformations tractable, inference orchestrators will need to make parallel reasoning at test time a built-in capability.

So how do you go about implementing this? Let’s build some intuition to get you started.

Multi-Sample + Majority Vote

The simplest baseline: ask the model the same question multiple times and see which answer comes up most often. This works because LLM sampling is stochastic: when the same answer emerges across independent samples, it’s usually more reliable.

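    from collections import Counter

    # model, query, and K stand in for your LLM call, prompt, and sample count.
    answers = [model(query, temperature=0.7) for _ in range(K)]
    final = Counter(answers).most_common(1)[0][0]  # most frequent answer wins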

Like asking five smart people the same question and going with the consensus.
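Here is a self-contained version of that idea you can run without a model. `make_noisy_model` is a hypothetical stand-in for an LLM that answers correctly with some fixed probability; the accuracy, seed, and wrong answers are arbitrary choices for illustration:

```python
import random
from collections import Counter
from typing import Callable, List, Tuple


def majority_vote(sample: Callable[[], str], k: int) -> Tuple[str, float]:
    """Draw k samples and return (most common answer, its share of the votes)."""
    if k < 1:
        raise ValueError("k must be at least 1")
    answers: List[str] = [sample() for _ in range(k)]
    winner, count = Counter(answers).most_common(1)[0]
    return winner, count / k


def make_noisy_model(correct: str, accuracy: float, seed: int) -> Callable[[], str]:
    """Stand-in for an LLM: right with probability `accuracy`, otherwise a random wrong answer."""
    rng = random.Random(seed)
    wrong_answers = ["40", "41", "43", "44"]

    def sample() -> str:
        return correct if rng.random() < accuracy else rng.choice(wrong_answers)

    return sample


if __name__ == "__main__":
    model = make_noisy_model(correct="42", accuracy=0.6, seed=0)
    answer, agreement = majority_vote(model, k=25)
    print(answer, f"{agreement:.0%}")
```

The vote share returned alongside the answer is a cheap confidence signal: a narrow win is a hint to spend more compute or escalate. Note that `Counter.most_common` breaks ties by first appearance, which is an arbitrary policy you may want to replace.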

Best-of-N with a Verifier

Here, instead of blindly voting, we score candidates against some verifier. The verifier might be another model, a set of heuristics, or even ground-truth tests (in coding tasks).

    # model and verifier are placeholders; verifier returns a numeric score.
    candidates = [model(query) for _ in range(N)]
    scored = [(cand, verifier(cand)) for cand in candidates]
    final = max(scored, key=lambda pair: pair[1])[0]  # candidate with highest verifier score

Think of it as generating multiple drafts, then letting an editor pick the best.
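For coding tasks, the verifier can simply be a test suite. The sketch below uses three hand-written functions as stand-ins for model-generated drafts and scores each by the fraction of test cases it passes; all names here are illustrative assumptions:

```python
from typing import Callable, List, Sequence, Tuple

Candidate = Callable[[int], int]
Case = Tuple[int, int]  # (input, expected output)


def pass_rate(candidate: Candidate, cases: Sequence[Case]) -> float:
    """Verifier: fraction of cases the candidate passes (crashes count as failures)."""
    passed = 0
    for arg, expected in cases:
        try:
            if candidate(arg) == expected:
                passed += 1
        except Exception:
            pass
    return passed / len(cases)


def best_of_n(candidates: List[Candidate], cases: Sequence[Case]) -> Tuple[Candidate, float]:
    """Score every candidate with the verifier and return the top one with its score."""
    scored = [(cand, pass_rate(cand, cases)) for cand in candidates]
    return max(scored, key=lambda pair: pair[1])


# Three hypothetical "drafts" of a function that should square its input.
drafts: List[Candidate] = [
    lambda x: x * 2,   # wrong: doubles
    lambda x: x * x,   # right
    lambda x: x ** 3,  # wrong: cubes
]
cases: List[Case] = [(0, 0), (1, 1), (2, 4), (3, 9)]

winner, score = best_of_n(drafts, cases)
print(score, winner(5))
```

The quality of best-of-N is bounded by the verifier: both wrong drafts here still pass half the cases, so a thinner test suite could easily pick the wrong winner.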

Search over Thoughts (Tree/Beam Search)

Rather than producing whole answers at once, generate reasoning step by step. Branch on partial outputs, prune weak paths, and expand promising ones.

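    # model_step, prune, and best are placeholders for your own step generator and scorer.
    frontier = [""]  # start from an empty reasoning chain
    for step in range(max_steps):
        expanded = []
        for partial in frontier:
            # Propose up to beam_width next steps for this partial chain.
            continuations = model_step(query, partial, beam_width)
            expanded.extend(continuations)
        # Keep only the most promising partial chains.
        frontier = prune(expanded, top_k=beam_width)
    final = best(frontier)

Instead of one linear train of thought, imagine exploring multiple branches of reasoning and following the ones that “look right.”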
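To make the branching and pruning concrete, here is a toy beam search with no model involved. The "reasoning steps" are arithmetic moves (add 3 or double) and the scorer rewards getting close to a target; both are assumptions chosen only so the example runs on its own:

```python
from typing import Callable, List, Tuple

State = Tuple[int, Tuple[str, ...]]  # (current value, steps taken so far)


def beam_search(
    start: State,
    expand: Callable[[State], List[State]],
    score: Callable[[State], float],
    beam_width: int,
    max_steps: int,
) -> State:
    """Keep the beam_width best partial chains at each step; return the best state seen."""
    frontier = [start]
    best = start
    for _ in range(max_steps):
        expanded = [child for state in frontier for child in expand(state)]
        if not expanded:
            break
        # Prune: keep only the highest-scoring partial chains.
        frontier = sorted(expanded, key=score, reverse=True)[:beam_width]
        if score(frontier[0]) > score(best):
            best = frontier[0]
    return best


TARGET = 20


def expand(state: State) -> List[State]:
    """Toy reasoning step: either add 3 or double the running value."""
    value, steps = state
    return [(value + 3, steps + ("+3",)), (value * 2, steps + ("*2",))]


def score(state: State) -> float:
    """Toy scorer: closer to the target is better."""
    return -abs(state[0] - TARGET)


result = beam_search((1, ()), expand, score, beam_width=3, max_steps=5)
print(result)
```

With `beam_width=3` the search reaches 20 exactly via 1, 4, 7, 14, 17, 20. With `beam_width=1` (greedy) it commits early to doubling and never gets closer than 19, which is the case for keeping more than one branch alive.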

Self-Critique and Repair Loops

Ask the model to check its own output and revise. This loop often catches simple arithmetic or logical slips.

    draft = model(query)
    for _ in range(max_iters):
        critique = model(f"Critique this answer: {draft}")
        if "no issues" in critique.lower():
            break  # the critic is satisfied; stop early
        draft = model(f"Revise based on critique: {draft}\n{critique}")
    final = draft

Write, proofread, revise, repeat.
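A runnable variant of the loop, with the three roles passed in as functions. In a real system `generate`, `critique`, and `revise` would each be model calls; the arithmetic stubs below are assumptions that exist only to exercise the control flow:

```python
from typing import Callable, Optional


def critique_and_repair(
    generate: Callable[[str], str],
    critique: Callable[[str, str], Optional[str]],
    revise: Callable[[str, str, str], str],
    query: str,
    max_iters: int = 3,
) -> str:
    """Draft, then alternate critique and revision until the critic is satisfied
    or the iteration budget runs out.

    critique() returns None when it finds no issues, otherwise a description.
    """
    draft = generate(query)
    for _ in range(max_iters):
        issue = critique(query, draft)
        if issue is None:
            break
        draft = revise(query, draft, issue)
    return draft


def generate(query: str) -> str:
    return "17 * 3 = 41"  # deliberately wrong first draft


def critique(query: str, draft: str) -> Optional[str]:
    expression, claimed = draft.split("=")
    a, b = (int(part) for part in expression.split("*"))
    if a * b == int(claimed):
        return None
    return f"{a} * {b} should be {a * b}"


def revise(query: str, draft: str, issue: str) -> str:
    expression = draft.split("=")[0].strip()
    corrected = issue.rsplit(" ", 1)[-1]  # the stub critic ends with the right value
    return f"{expression} = {corrected}"


final = critique_and_repair(generate, critique, revise, "What is 17 * 3?")
print(final)
```

Having the critic return a structured "no issue" signal (here `None`) is a design choice: it avoids depending on the model to phrase its approval in an exact string.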

Tool-Augmented Inference

Bring in external checks: calculators for math, retrievers for grounding, unit tests for code.

    draft = model(query)
    if has_equations(draft):
        result = external_calc(draft)  # recompute the math outside the model
        draft = inject_result(draft, result)
    if not passes_tests(draft):
        draft = repair(draft)

Give the model instruments instead of trusting it to play by ear.
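As one small, concrete instance of an external check, the sketch below recomputes simple arithmetic claims of the form `a op b = c` found in a draft and overwrites any wrong result. The regex and the scope (two operands, four operators) are simplifying assumptions; a real calculator tool would need to handle far more:

```python
import operator
import re

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
NUM = r"\d+(?:\.\d+)?"
CLAIM = re.compile(rf"({NUM})\s*([+\-*/])\s*({NUM})\s*=\s*(-?{NUM})")


def correct_arithmetic(draft: str) -> str:
    """Recompute every 'a op b = c' claim in the draft and overwrite wrong results."""

    def check(match: re.Match) -> str:
        left, op, right, claimed = match.groups()
        if op == "/" and float(right) == 0:
            return match.group(0)  # leave division by zero untouched
        actual = OPS[op](float(left), float(right))
        if abs(actual - float(claimed)) < 1e-9:
            return match.group(0)  # claim checks out; keep the original text
        return f"{left} {op} {right} = {actual:g}"

    return CLAIM.sub(check, draft)


draft = "The order totals 17 * 12 = 194 units and ships in 204 / 4 = 51 crates."
print(correct_arithmetic(draft))
```

The first claim is corrected to 204 and the second is left alone because it already checks out. The tool only fixes the numbers it can verify; it does not reason about whether later sentences still follow, which is where a repair pass by the model comes back in.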

Each of these techniques scales with parallelism: more samples, more branches, more verifier calls. And each one benefits from smart stop rules: don’t always burn the full budget, quit early when confidence is high.
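One simple stop rule for voting, sketched under the assumption that samples arrive one at a time: stop as soon as the leading answer cannot be overtaken even if every remaining sample went to the runner-up. The function and parameter names are illustrative:

```python
from collections import Counter
from typing import Callable, Tuple


def vote_with_early_stop(sample: Callable[[], str], max_samples: int) -> Tuple[str, int]:
    """Sample sequentially and stop once the leader cannot be overtaken.

    Returns (winning answer, number of samples actually used).
    """
    if max_samples < 1:
        raise ValueError("max_samples must be at least 1")
    votes: Counter = Counter()
    for used in range(1, max_samples + 1):
        votes[sample()] += 1
        ranked = votes.most_common(2)
        runner_up = ranked[1][1] if len(ranked) > 1 else 0
        lead = ranked[0][1] - runner_up
        remaining = max_samples - used
        if lead > remaining:
            return ranked[0][0], used  # outcome is already decided
    # Budget exhausted with a tie at the top: fall back to the first-seen leader.
    return votes.most_common(1)[0][0], max_samples
```

With a budget of nine samples and a model that always agrees with itself, this stops after five. The rule is conservative: it returns the same winner the full vote would (ties aside) and only saves the samples that could not have changed the outcome. Looser rules, such as stopping at a fixed vote share, save more compute at the cost of occasionally being wrong.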

The shift from training scale to inference scale is an answer to the determinism problem. We no longer depend only on bigger models for reliability. We can derive it from smarter orchestration at runtime, where parallelism, verifiers, and stop rules combine into systems that steer models toward trustworthy results.

Test-time compute scaling doesn’t make models smarter; it makes the system around them smarter. And in the new era of AI, that’s where the real breakthroughs will come from.

What do you think?