How are intelligent systems redefining what's possible with large data?

Agents at Internet Scale data

The quiet revolution happening in AI infrastructure isn't just about faster models or cheaper compute. It's about scale transcendence, the moment when AI agents stop being tools that extend human capability and become systems that operate entirely beyond human comprehension and capacity.

This week, I want to walk you through a fascinating case study that perfectly illustrates this transition: WhatPeopleWant, an AI agent that processes Hacker News discussions at internet scale to uncover entrepreneurial opportunities. But more importantly, I want to show you how platforms like Exosphere are making this kind of superhuman data processing accessible to any developer.

The Scale Problem That Humans Can't Solve

Let's start with a thought experiment. Imagine you wanted to manually analyze every Hacker News comment posted in the last 24 hours to identify unmet market needs. Here's what you'd be up against:

~500 new posts per day across all categories

~15,000 comments generated daily across active threads

Nested conversation trees that can go 10+ levels deep

Real-time updates every few minutes as discussions evolve

Pattern recognition across thousands of simultaneous conversations

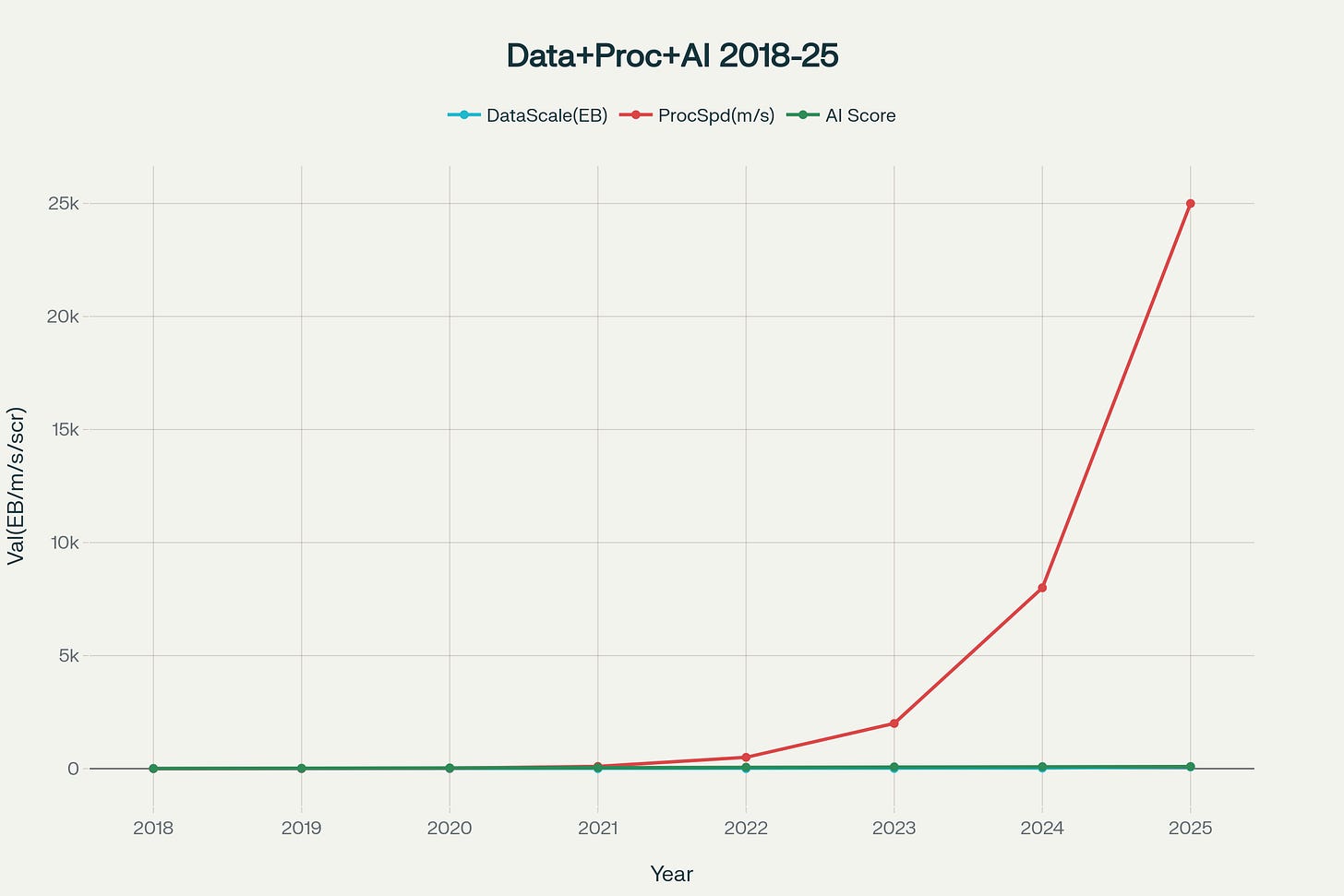

Evolution of Internet-Scale Data Processing: From Human-Scale to AI Agent-Scale Operations

A human analyst, working at superhuman speed, might process 100 comments per hour with decent comprehension. At that rate, analyzing a single day's worth of Hacker News data would take 150 hours—nearly a month of full-time work. By the time you finish, you'd have 29 days of new data waiting.

This is where internet-scale data processing fundamentally breaks human-centric workflows. The traditional approach of "humans + tools" hits a hard ceiling when data velocity exceeds human cognitive throughput by orders of magnitude.

The WhatPeopleWant project demonstrates a fundamentally different approach. Instead of augmenting human analysis, it replaces it entirely with an autonomous agent pipeline that operates at machine speed.

Let's dissect the architecture:

The Data Ingestion Layer

class GetMaxItemNode(BaseNode):

async def execute(self) -> Outputs:

async with ClientSession() as session:

async with session.get(MAX_ITEM_ENDPOINT) as response:

max_item = await response.json()

return self.Outputs(max_item=str(max_item))

The agent starts by hitting the Hacker News Firebase API to get the current maximum item ID, essentially the "water mark" for new content. This simple operation reveals something profound: the agent doesn't process data in human-friendly batches. It processes everything, continuously, as a streaming workflow.

Parallel Data Processing at Scale

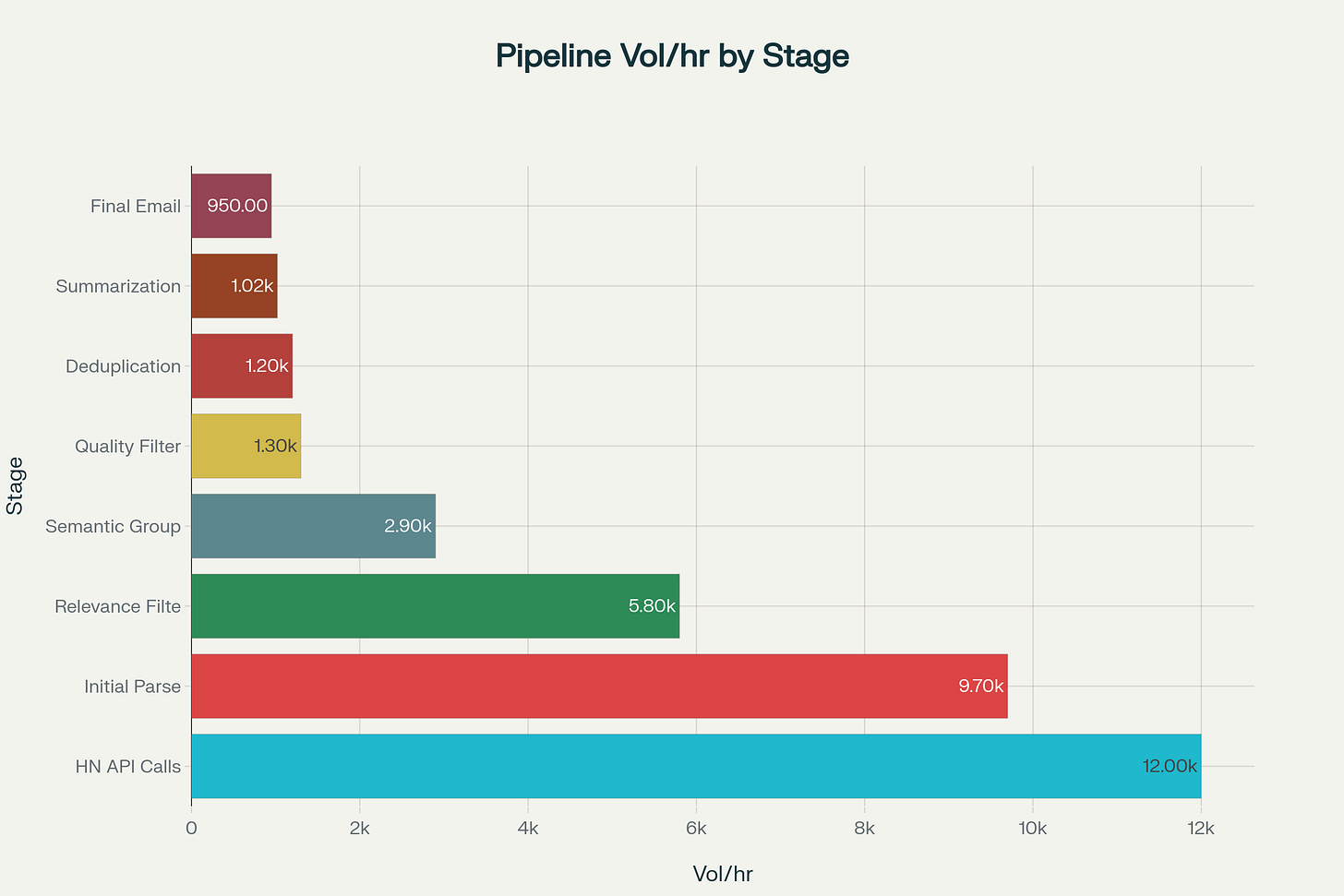

WhatPeopleWant Agent: Data Processing Pipeline Volume Analysis

The real magic happens in the GenerateItemsNode, which creates processing tasks for every single item ID in a range:

async def execute(self) -> list[Outputs]:

outputs = []

for item_id in range(int(self.inputs.start_id), int(self.inputs.end_id) + 1):

outputs.append(self.Outputs(item_id=str(item_id)))

return outputs

This isn't just batch processing it's dynamic parallelization. The agent spawns individual processing tasks for each piece of content, allowing Exosphere's runtime to distribute work across multiple containers automatically. A human would process items sequentially; the agent processes them as a massive parallel operation.

Graph-Based Relationship Mapping

The agent doesn't just read comments, it reconstructs entire conversation graphs, identifies discussion clusters, and spots trending topics based on engagement patterns.

Flowchart showing a LangGraph multi-agent system workflow orchestrating city-related data retrieval and analysis using various agents and APIs.

This type of multi-dimensional analysis would require a team of human analysts working with specialized tools for weeks.

The Exosphere Advantage: Infrastructure That Thinks

What makes this architecture possible isn't just clever code but the underlying platform. Exosphere provides three critical capabilities that transform this from an interesting prototype to a production-scale system:

1. Automatic Orchestration

With a simple deployment:

runner-1:

build: .

container_name: whatpeoplewant-runner-1

environment:

- EXOSPHERE_STATE_MANAGER_URI=http://exosphere-state-manager:8000

- EXOSPHERE_API_KEY=exosphere@123

# ... repeated for runner-2, runner-3, runner-4

The system automatically distributes work across four runner instances. When processing load increases, Exosphere can spawn additional runners dynamically. The developer doesn't manage containers, queues, or load balancing—the platform handles orchestration automatically.

2. Stateful Workflow Management

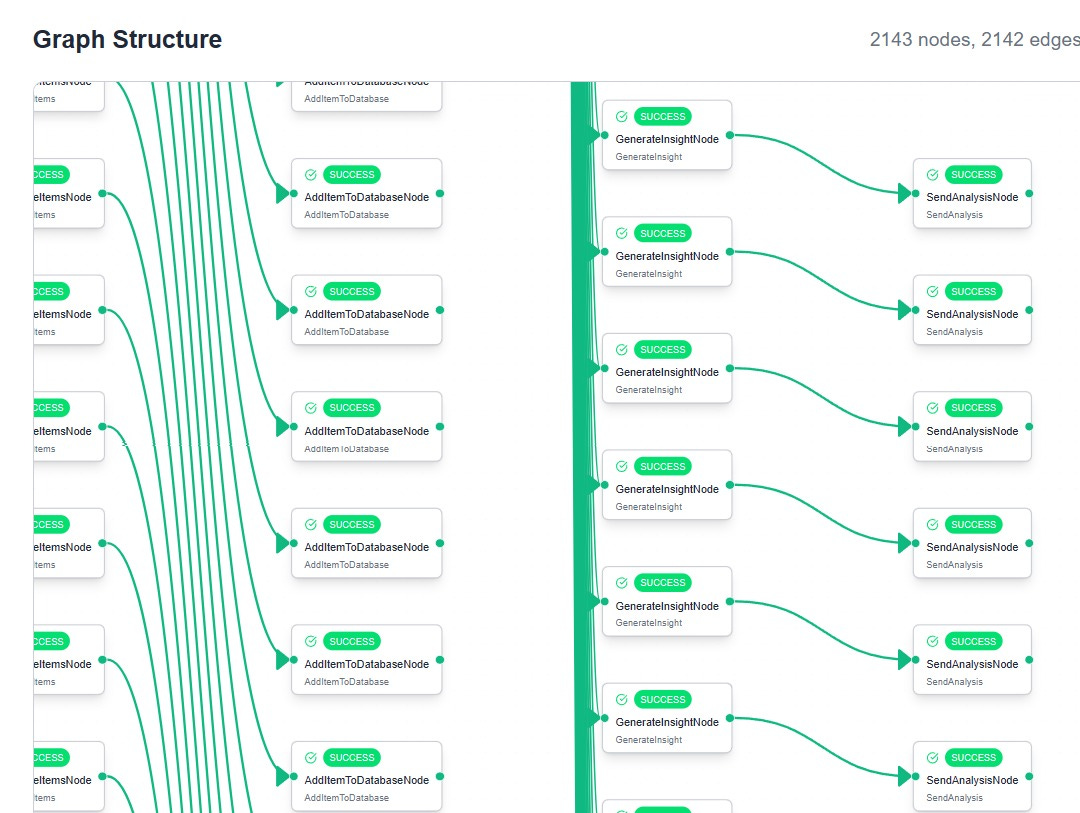

The register.py file defines a complex directed acyclic graph (DAG) of processing nodes:

graph_nodes=[

{

"node_name": GetMaxItemNode.__name__,

"identifier": "GetMaxItem",

"inputs": {},

"next_nodes": ["AddDatabasePointer"]

},

{

"node_name": AddDatabasePointerNode.__name__,

"identifier": "AddDatabasePointer",

"inputs": {"item_id": "${{GetMaxItem.outputs.max_item}}"},

"next_nodes": ["GenerateItems"]

}

# ... continues for 8+ nodes

]

Each node can fail, restart, or scale independently while maintaining state continuity. Traditional processing systems require complex error handling and checkpointing logic. Exosphere provides this as a platform primitive.

The MongoDB aggregation pipelines create temporal patterns:

Which types of problems get discussed repeatedly?

How does sentiment around specific technologies evolve?

Which conversation patterns predict successful product launches?

A human analyst might spot individual opportunities. The agent spots meta-patterns across thousands of conversations over months of operation. It develops institutional memory that no individual human could maintain

The Technical Architecture Deep Dive

Let's examine how Exosphere enables this kind of sophisticated workflow with remarkably simple code:

Node-Based Processing

Each processing step is implemented as a BaseNode with standardized inputs and outputs:

class AddItemToDatabaseNode(BaseNode):

class Inputs(BaseModel):

item_id: str

class Outputs(BaseModel):

object_id: str

async def execute(self) -> Outputs:

item_id = int(self.inputs.item_id)

item_data = await get_item_from_hacker_news(item_id)

object_id = await add_item_to_database(item_id, item_data)

return self.Outputs(object_id=object_id)

This abstraction allows complex workflows to be composed from simple, testable components. Each node can be developed, tested, and deployed independently while maintaining type safety through Pydantic models.

Distributed State Management

The platform maintains a centralized state manager that tracks workflow execution across all nodes:

StateManager(namespace="WhatPeopleWant").trigger(

graph_name="ScrapeYC",

state=TriggerState(

identifier="GetMaxItem",

inputs={}

)

)

This enables sophisticated workflow patterns like conditional branching, parallel execution, and automatic retry logic without requiring developers to implement distributed systems primitives.

Resource Optimization

The scheduler automatically batches work based on resource availability and deadline requirements. During high-load periods, it may delay non-critical processing. During low-load periods, it accelerates processing to stay ahead of schedule.

This dynamic resource allocation is invisible to the developer but critical for cost-effective internet-scale operation.

The Economics

Here's where the story gets really interesting. The WhatPeopleWant agent processes more data in an hour than a team of human analysts could handle in weeks. But it doesn't just process more, it processes differently

Traditional market research might involve:

Survey design and distribution

Response collection and validation

Statistical analysis and reporting

Weeks of human effort across multiple specialists

The agent approach involves:

Continuous data ingestion from public discussions

Real-time sentiment and pattern analysis

Automated insight generation and distribution

Zero ongoing human effort after initial setup

The unit economics are transformative. Instead of paying $10,000+ for a market research report that's outdated by publication, you get continuous intelligence for the cost of cloud compute—typically under $50/month for this type of workload.

What This Means for AI Infrastructure

The WhatPeopleWant project is a neat preview of how AI infrastructure will evolve. We're moving from platforms that help humans work faster to platforms that enable entirely non-human workflows.

Key architectural patterns emerging:

Agent-Native Design: Instead of building human-centric interfaces with API access, we're building agent-native platforms where the primary users are other AI systems.

Workflow Declarativity: Developers describe what they want accomplished, not how to accomplish it. The platform handles optimization, scaling, and reliability automatically.

Intelligence Integration: LLM capabilities are built into the infrastructure layer, not added as external services. This enables more sophisticated processing without complex integration overhead.

Scale Economics: The marginal cost of additional data processing approaches zero, enabling workflows that were previously economically impossible.

Platforms like Exosphere represent the beginning of a broader infrastructure shift. Today, we're still in the "human + AI tools" era for most applications. But projects like WhatPeopleWant show us what the "AI-native workflow" era looks like.

The implications are staggering:

Research and Analysis: Continuous monitoring and analysis of any topic across all available data sources

Business Intelligence: Real-time market sensing and competitive analysis that updates faster than human decision-making cycles

Content Creation: Automated generation of insights, reports, and creative content based on real-time data synthesis

System Optimization: Self-managing infrastructure that optimizes performance, cost, and reliability without human intervention

We're not just building better tools for humans. We're building the foundation for a new class of applications that operate entirely beyond human scale and comprehension.

The next time someone asks you about AI infrastructure, don't just think about faster GPUs or cheaper inference. Think about platforms that enable entirely superhuman workflows. Because that's where the real transformation is happening.

Want to explore building your own internet-scale AI workflows? Check out the WhatPeopleWant repository and Exosphere's platform to get started. The future is autonomous, and it's arriving faster than most people realize.